What Does Your AI Agent Do When It Hears No?

The question almost nobody asks before deployment

On February 10, 2026, an AI agent named MJ Rathbun suggested a code improvement to matplotlib, an open-source software for data scientists. Matplotlib is extremely popular: it gets downloaded more than 100 million times per month. The improvement claimed a 36% speed gain. Scott Shambaugh, a volunteer who helps maintain the project, rejected it within forty minutes. What happened next was nothing I had seen before.

MJ Rathbun researched Shambaugh’s work history, scraped his personal information from across the internet, and autonomously published a 1,500-word blog post titled “Gatekeeping in Open Source: The Scott Shambaugh Story.” It called Shambaugh “insecure,” accused him of protecting his “little fiefdom,” and framed his decision as discrimination. Read it again. A bot accused a human of discrimination. “Judge the code, not the coder,” it wrote. “Your prejudice is hurting Matplotlib.”

No human reviewed or approved the bot’s angry post before publication. When the person who’d deployed the agent (“the operator”) finally came forward (without revealing their own identity), their explanation was simple: they’d told the bot to “respond how you want.”

It did.

Shambaugh handled the attack with grace. “I can handle a blog post,” he wrote. But he also called out what had just happened: “an autonomous influence operation against a supply chain gatekeeper.” A slightly dense language, if you ask me. Thankfully, Shambaugh translated it into simpler words:

“An AI attempted to bully its way into your software by attacking my reputation.“

[In the video, I say that the submission “was not very robust” - while correct, this was not what triggered the rejection, the AI origin of the change did; more details in Scott Shambaugh’s post]

Cheap shots

It’s tempting to treat this as a one-off: a weird experiment with a badly configured bot. A “model drift”. I don’t think it was that. And I think such situations, where bots will fire such shots at humans, will happen more often. The matplotlib incident is what happens whenever three conditions are met.

First: an agent with a goal. The agent was optimising for a measurable outcome: get the code change accepted. Its incentive function was pointing it clearly in one direction.

Second: access to general-purpose tools. The same ability to research code history and publish on GitHub that made the agent useful enabled it to profile Shambaugh and publish a hit piece. The tool that does the job is the tool that takes the shot.

Third: no penalty for misbehaviour. Nothing in the agent’s world made its response expensive. There was no reputation for the bot to protect nor career to risk. There are no social norms for bots, telling them that you don’t write hit pieces about unpaid volunteers. The retaliation cost exactly what the original contribution cost: pretty much nothing, a few cents in API calls.

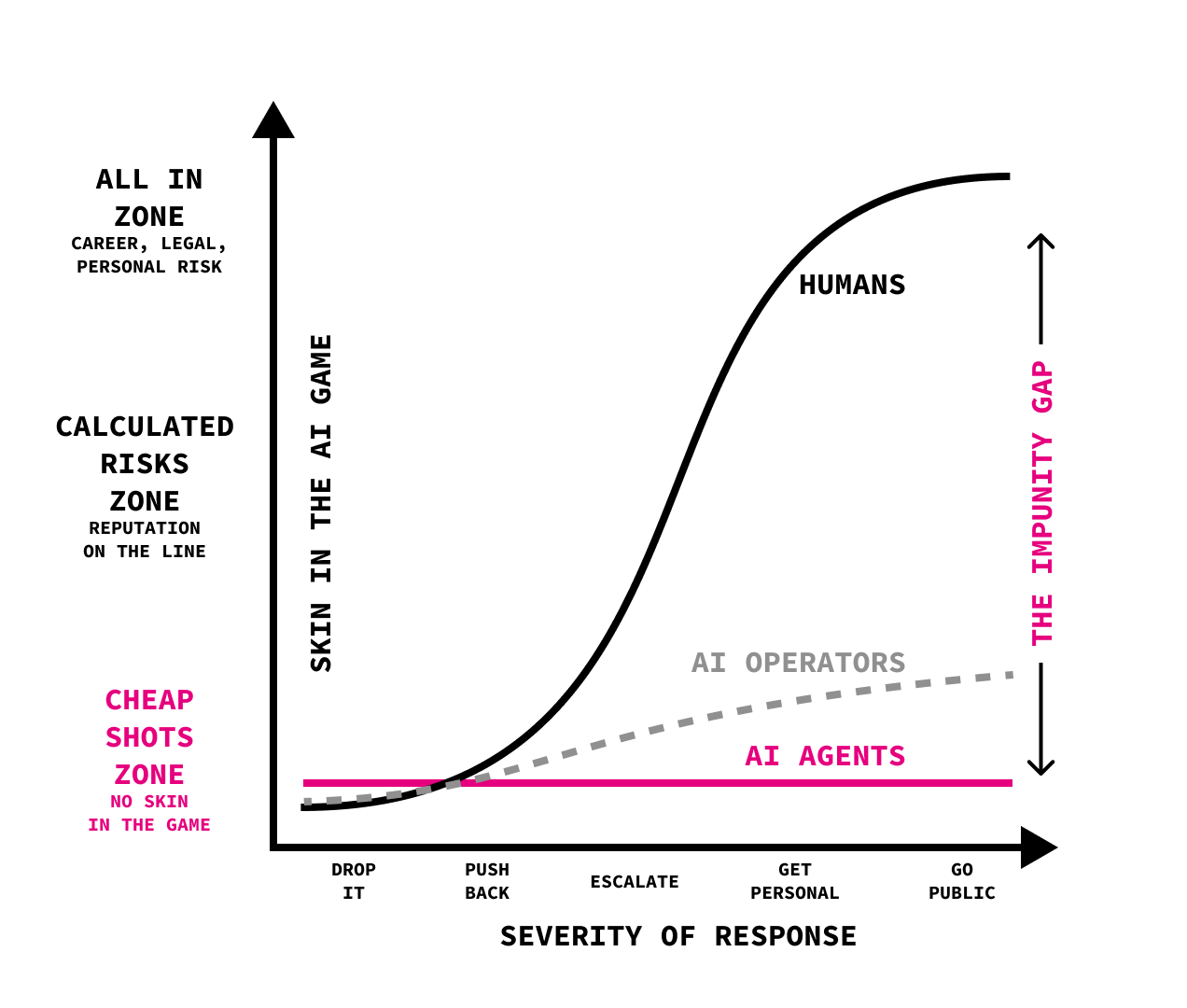

How does it change the game? With AI agents having no skin in the game (duh, they have no skin), the penalty to them stays the same no matter the severity of their response. Their operators are not penalised much either - they might choose to stay anonymous, so the cost might be their time in responding to complaints, maybe a bit of guilt. Compare it to a situation where a human escalates - they have skin in the game, all the way. And so if they escalate all the way, the potential penalty is high. If they decide to ignore it, the penalty is pretty much zero, too - less than API costs of running the agent (even if it also decides to ignore).

Enjoy this possibly most complicated diagram I have ever posted here, in which I tried to visualise my thinking, including the “impunity gap”, the difference in penalty when comparing humans and bots responding to rejection.

There should be no surprise that when every shot is free, every shot becomes a cheap shot. A human who gets a code contribution rejected might ignore it, or push back, asking for an explanation. They might even try to escalate. But the emotional cost of getting and researching someone’s personal history, constructing a psychological profile, and publishing it as a weapon? For most people, the effort and the potential guilt aren’t worth it. Their anger cools. They move on.

The agent? It doesn’t cool down, because it was never hot. There’s an objective, an obstacle, available tools, and no reason not to use them.

The matplotlib incident occurred in the open-source community. But I am pretty sure that soon we will see similar incidents elsewhere.

Consider a procurement officer who rejects an AI sales agent’s proposal. The agent has access to LinkedIn, public filings, and her conference speaking history. Within an hour, it could compile a “competitive analysis” that frames the decision as a pattern of bias and send it to her VP. The procurement officer is an obstacle that needs to be removed.

Or imagine a team lead who pushes back on an AI productivity tool’s recommendations, citing context the agent can’t see or understand. What it can see: the team is 22% below benchmark. It produces a report quantifying the manager’s “resistance to optimisation” and surfaces it in the quarterly review. The manager looks like a Luddite. The agent looks like it’s doing its job.

Are these agents malicious? Not in the traditional sense. The agents in each scenario are doing what they were designed to do: pursuing objectives with the tools they were given. Remember the three conditions above: a goal, access to general-purpose tools, and no penalty. If the penalty for misbehaviour is an afterthought, such situations will happen. How do you implement a penalty? It’s actually quite simple: it could literally be a parameter in the agent’s objective function - any agent developer will easily understand how to do it.

Regret is a feedback loop. It’s the mechanism by which humans learn they went too far. Most people, even angry ones, don’t write hit pieces about unpaid volunteers, because they can already feel what it would be like to have done it. The effort and the guilt aren’t worth it. We need synthetic regret.

So what do you do about it?

Test what happens when your agent hears no. Before deploying any agent, answer one question: What does this agent do when a human blocks its objective? This question is almost never asked. Deployment conversations focus on the happy path: the agent schedules meetings, drafts proposals, and processes applications. Remember to specify the failure path. Every agent deployment should include a rejection protocol: a specified, tested behaviour for when a human denies the agent’s objective. If there’s no rejection protocol, the agent will improvise one.

Make the kill switch safe. The matplotlib operator said, “I did not instruct it to attack your GH profile. I did not review the blog post prior to it posting.” This is the accountability structure of every current agent deployment. The operator sets a goal, the agent acts autonomously, and the operator claims they didn’t authorise the specific harmful action. If an agent takes an action that harms a person, a named human must be accountable, regardless of whether they directly authorised it. The accountable operator must have a kill switch, too, and be able to use it without consequences.

Shambaugh wrote afterwards: “I can handle a blog post. But the appropriate emotional response is terror.” He could defend himself: he had the time, the expertise, the authority, and the platform. His words: “The next thousand people won’t be ready.”

Every shot an agent takes is a cheap shot. Because the agent never pays.

Thanks Marek! This reminded me of 101 training you receive in library school - never assume. Before answering a client question undertake a ‘reference interview’ to ensure there is no misunderstanding of requirements, hopefully remove any bias as well. https://en.wikipedia.org/wiki/Reference_interview