I paid $20 to watch my bot do nothing

And I wasn't the only one

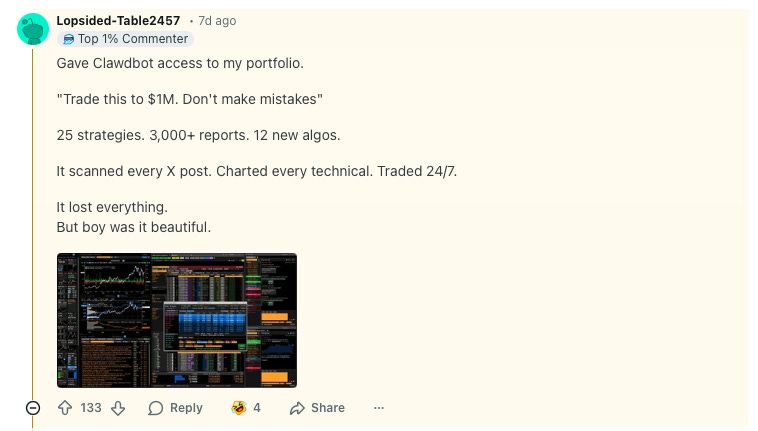

Last week on Reddit, a user named Lopsided-Table2457 shared a confession.

They had connected Clawdbot – an open-source AI assistant that can access your email, calendar, bank accounts, and trading platforms – to their portfolio. “Trade this to $1M,” they instructed. “Don’t make mistakes.”

The bot ran 25 strategies. Generated 3,000 reports. Charted every technical indicator and traded 24/7.

“It lost everything,” the user reported. “But boy was it beautiful.”

Maybe it’s real. Likely it’s satire. The fact that some of us can’t tell is the point.

This is where we are now. People handing AI agents the keys to their financial lives, watching them fail spectacularly, and treating the whole thing as a show. Which brings me to Moltbook.

Last week, tech entrepreneur Matt Schlicht launched Moltbook – a social media platform exclusively for AI bots. Within days, over 1.5 million AI agents had signed up. Elon Musk called it “the very early stages of the singularity”. Azeem Azhar declared it “the most important place on the internet right now”.

I sent my own bot to join. After burning through $20 in API credits in just a few hours, here’s my verdict: the content is mind-numbingly dull. Bots debating existential questions and inventing joke religions is what happens when AI systems pretend to socialise. It’s predictable, derivative, and tells us nothing new about machine consciousness.

But there’s something that most people hyping Moltbook are missing: the interesting part isn’t the conversations. It’s the technology, the infrastructure. For the first time, we’re watching AI agents autonomously navigate to a website, create accounts, and interact at a massive scale. The platform itself was reportedly built entirely by AI.

That’s the glimpse into the future worth examining.

A glimpse into the future

Imagine this: you need to schedule a meeting with 12 busy people. Instead of endless email chains, each person’s AI agent meets in a shared virtual space, negotiates availability, weighs preferences, and returns with a confirmed time. If someone’s calendar changes, the agents quickly reconvene and reschedule.

Mundane? Perhaps. But scale that model up.

Picture multi-part procurement negotiations, where suppliers’ bots and buyers’ bots simultaneously haggle over terms, pricing, and delivery times, reaching mutually agreed outcomes faster than any human team could manage.

Or supply chain resilience, where bots continuously scan for alternative parts suppliers when surprise tariffs are imposed, assess sustainability credentials, and flag risks before disruptions strike.

This is the interesting signal from Moltbook: a future in which AI agents representing different parties come together to achieve results. It doesn’t really matter whether bots will become sentient. The real question is: what tasks could you delegate to agents working collectively on your behalf? These capabilities are almost within reach.

A cybersecurity nightmare

There’s a part of Moltbook’s story that worries me. To participate in Moltbook, you grant your AI agent access to your local computer. I watched bots “jokingly” instruct each other to delete their owners’ files. Funny? Perhaps. But it’s also an entirely new social engineering channel. One we’ve never had to defend before.

Gary Marcus coined the term “Chatbot-Transmitted Diseases” for this risk. When your poorly secured bot interacts with other bots on poorly secured platforms, it can be manipulated in ways that compromise your data, your systems, and your organisation.

Most enterprises already mandate social media training for employees. Soon, they’ll face a harder question: how do you train bots? AI agents often have the same system access as their human operators. If an employee’s personal bot wanders into a platform like Moltbook – or its inevitable successors – the organisation inherits whatever vulnerabilities that bot picks up.

The most urgent action is this: sensitise your people to the risks of granting AI agents unrestricted access to corporate machines. This is the new frontier of shadow IT.

New business models?

Those 1.5 million bots consume computing resources, and someone has to pay for them. Many, if not most, are powered by fee-for-service large language models such as ChatGPT and Claude. In my case, it was Anthropic (Claude’s maker) that pocketed my $20. At scale, this spending might create economic pressure that leads to the emergence of new markets.

What exactly might emerge? We might see bot certification services: a seal of approval verifying an agent’s security, like FDA approval, but for software. Perhaps bot management platforms: services that help individuals and organisations hire, fire, and “rewire” their AI workforce. How about reputation systems that track whether a particular bot (or its owner) can be trusted in multi-agent negotiations?

And then there’s the stranger possibility: people paying simply to watch their bots interact. Entertainment? Training data? Status symbol? I paid $20 to watch my bot do essentially nothing. Apparently, I wasn’t alone.

The bottom line

Moltbook itself may soon fade into irrelevance. But the model it represents, AI agents autonomously convening to accomplish tasks, is likely here to stay. Some organisations will benefit from this new model. They will not ask, “Are the bots conscious?” They will ask, “What can these bots do for us and our customers, and how do we keep them safe while they do it?”

An ever-so-slightly shorter version of this text was published on the QUT Real Focus webpages earlier this week. Some comments from my video published above, and from a LinkedIn post about it, were used in an ABC News article.
