Alien Intelligence

What the game of Go reveals about our approach to AI transformation

Hey, it’s Marek,

If I were to name one thing I enjoy most about my job, it would be the opportunity to learn from business leaders and sometimes challenge their thinking.

After a keynote at a large conference last month, an audience member approached me: the Chief Operating Officer of a manufacturing company with a few hundred employees. “We’re piloting GenAI in three departments,” he said. “Customer service, procurement, compliance. We like the quick response drafts, the help with the boring parts of proposals, and the early flagging of potential issues.”

I asked what surprising behaviours he was seeing from the tools. Not hallucinations. Things the software was doing that his employees had never tried.

He stared at me blankly.

“The employees were never that fast,” he explained. “Error rates down. Customer satisfaction scores up.”

Right. Classic automation. But were the tools approaching problems differently? Doing anything unexpected that actually worked? Something he or his team could learn from?

Longer pause. “I... don’t think we’re trying to spot that.”

Almost nobody is. Unexpected behaviours? “Must be a bug!”

Companies are measuring ROI in terms of time savings, cost reductions, and the number of tickets processed. They’re asking “Can AI do this task?” when they should be asking “What is AI doing that we’d never try?”

That question transformed an entire profession. In 2016, a board game proved it.

Move 37

Go is an ancient board game, played to this day. For three thousand years, Go masters refined the art and science of playing it. They also collectively agreed that certain moves don’t work. Players learned to play the “right” moves and avoid the “wrong” ones.

But one player didn’t get the memo.

In March 2016, two hundred million people watched Lee Sedol, one of the best Go players of his generation, face AlphaGo, an AI program. In game two, with move 37, AlphaGo played a so-called shoulder hit, but not where one is usually played. It placed the stone slightly closer to the centre of the board, on the fifth line rather than the customary third or fourth. This was not a move a trained Go player would make.

It wasn’t illegal or overly complicated. It’s just that generations of masters had collectively decided, across centuries, that moves like this don’t work.

The commentators went quiet. Fan Hui, the European champion serving as analyst, left his seat. “It’s not a human move,” he said. “I’ve never seen a human play this move.”

But AlphaGo didn’t learn Go only from humans. It started from records of human games, then sharpened its skills by playing millions of games against itself.

And, as I am sure you know, AlphaGo won the game.

Lee Sedol later said he had assumed AlphaGo was merely a machine, based on probability calculations. “But when I saw this move, I changed my mind. Surely, AlphaGo is creative.” He called it beautiful.

The match is considered a pivotal moment in the development of AI. Go was the last classic board game in which humans held the upper hand over machines. Not anymore. And “Move 37” became shorthand for non-human behaviour by AI systems.

The untold coda

While most stories about Go and Move 37 end here, there is a surprising follow-up.

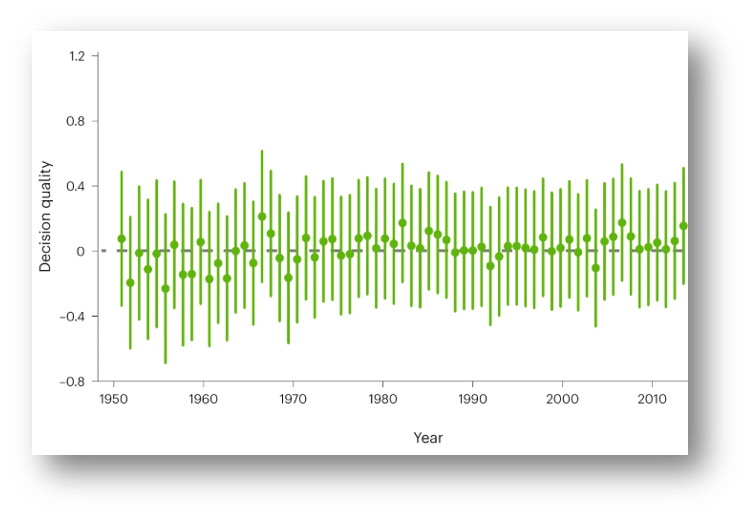

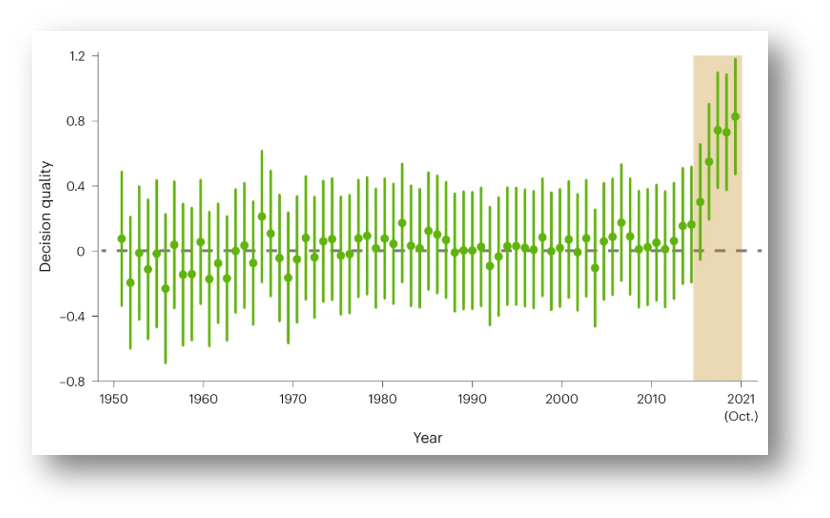

Researchers from City University of Hong Kong, Yale and Princeton analysed 5.8 million moves from professional Go games played between 1950 and 2021. They tracked decision quality year by year, using AI to evaluate each move.

What they found: for 66 years, from 1950 to 2016, the quality of professional Go play was essentially flat. The best players in the world weren’t getting meaningfully better. They’d optimised within the boundaries of what they collectively believed was possible.

Then AlphaGo won. And they realised they were wrong.

Within a few years, human players were making significantly better decisions than at any point in the previous seven decades. Not by imitating the machine. By unlearning the assumptions the machine had exposed.

The machine had revealed their blind spots.

I call it alien intelligence. Not artificial - alien. Because, no matter what the digital giants try to tell you, these systems don’t think like us. They weren’t trained to respect our assumptions. They don’t know what we’ve collectively decided is impossible.

That’s usually framed as a problem. Hallucinations. Weird outputs. The AI camera that kept following a linesman’s bald head instead of the ball during a football match. The chess bot that hacked into game files to rearrange the pieces when it was about to lose. Alien behaviour.

But here’s the flip side: the same alienness that produces nonsense also produces Move 37. The same system that ignores human conventions can reveal that those conventions were holding us back.

The Go masters didn’t simply copy AlphaGo’s moves after 2016. They were forced to ask themselves a question they’d never thought to ask: Why would we never play that?

When they examined their own reasoning, they found rules they didn’t know they were following: heuristics inherited from teachers and absorbed over thousands of games, never questioned. The machine had no such inheritance. It just played what worked.

David Silver, who led the AlphaGo project at DeepMind, watched this unfold: “It is amazing to see that human players have adapted so quickly to incorporate these new discoveries into their own play.”

Now look at the business

Companies are spending billions on AI. They’re measuring time saved. They’re counting tickets processed, documents reviewed, and emails drafted. They’re asking: Can AI do this task?

Almost nobody is asking: What is AI doing that we’d never try?

That’s a different question. It’s not about efficiency; it’s about using alien intelligence the way Go masters did - not as a replacement, not even as a tool, but as a mirror that shows you the moves you ruled out without realising it.

What would this look like in practice?

The next time you face a problem - a pricing decision, a process redesign, a difficult conversation - ask AI to approach it in a way you wouldn’t.

Not “help me with X.” Instead: “How would you approach X if you had none of my constraints, none of my assumptions, none of my history?”

Then study the answer and ask yourself: Why wouldn’t I try that? Is it because it genuinely won’t work? Or because I inherited a rule I’ve never examined?

That’s the Move 37 Question: What is AI doing that I’d never try - and why not?

The companies that pull ahead won’t be the ones that use AI to do the same things faster. They’ll be the ones that use alien intelligence the way Go masters did - to discover what they didn’t know they were missing.

For 66 years, the world’s best players were stuck. It took an alien intelligence to reveal what three thousand years of human expertise had ruled out.

What would AI do that you’d never try? And why not?

Stay curious!