80% of Your AI's Thinking May Be Theatre

New research on reasoning models, and what it means for your budget

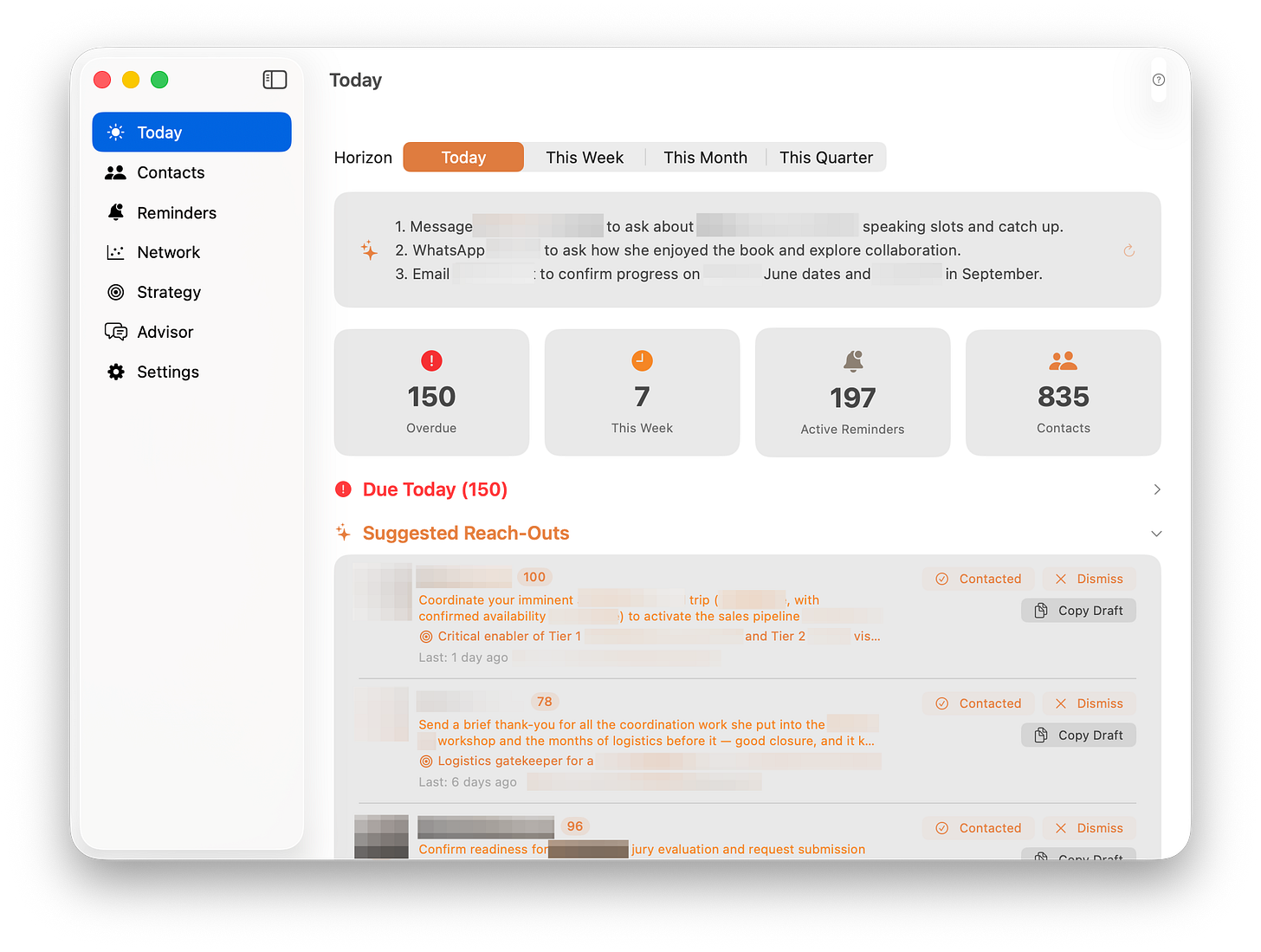

I have a personal CRM that I built myself. It uses large language models to analyse the data I have about my contacts: emails (the ones I am allowed to analyse), meeting notes, and public information. It then connects all of it to my goals and strategy (yes, I am one of these people who still remember their New Year’s resolutions in March). But because there’s a lot of data, running the most powerful models on every contact would likely cost me a small fortune.

So I made a practical choice: cheaper, faster models handle most contacts. For the few dozen that matter most, I have an option to flick a switch manually: upgrade them to the most capable model available. Plus extended thinking. Full power.

Yesterday, during a committee meeting in Canberra, I had 47 of these premium contacts updating on my screen. I watched the numbers tick. Each one grinding through extended reasoning, token by token. It felt like watching a taxi meter on the way home when I was a student - does it have to turn so quickly? (If the committee chair is reading this: I was paying full attention. The updating numbers were like a screensaver.)

But there’s a catch. After several weeks of running this system, I literally cannot tell the difference in quality between the expensive model and the cheap one. The analysis reads the same. The recommendations are just as useful. The only measurable differences? The premium model is slower and costs more.

I thought maybe I was imagining things.

I wasn’t.

Reasoning theatre

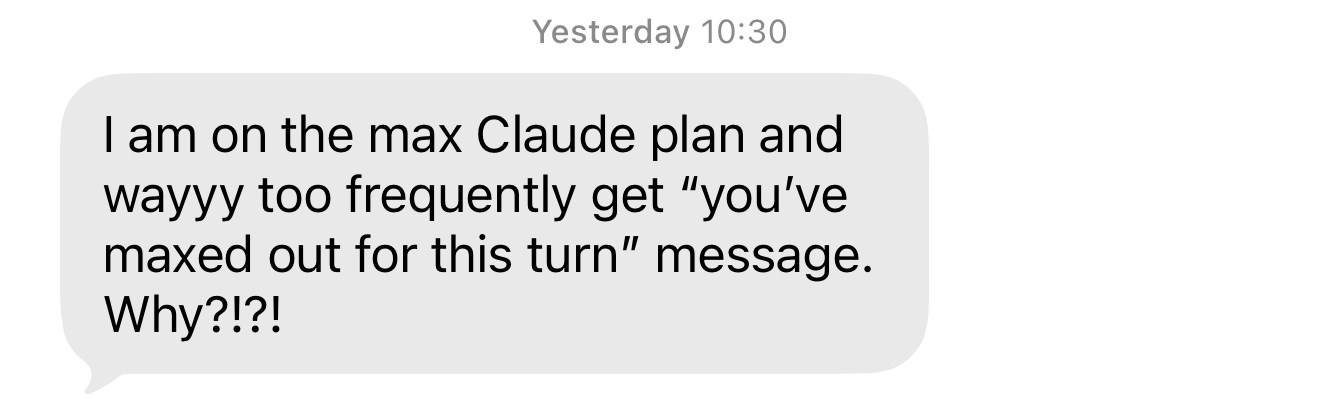

A colleague messaged me the other day, frustrated. They’re on the most expensive AI plan available and keep hitting usage limits. Why? Because every query they send triggers extended reasoning: the model consumes thousands of invisible “thinking” tokens before producing a few sentences of output. They’re paying for a process they have little control over.

And a growing body of research suggests that much of that reasoning may not be doing what we think it’s doing.

A paper published this week by Basu and Chakraborty tested 10 frontier models, including GPT-5.4, Claude Opus, and DeepSeek, across four task types. Their method was simple: remove one reasoning step at a time and check whether the final answer changes. For most models on most tasks, removing any single step changed the answer less than 17% of the time. Every individual step was sufficient on its own to reach the correct answer. No step was individually necessary.

The reasoning of these models looked real, read well… But it turns out it just wasn’t changing the answer.

A separate team at Goodfire AI and Harvard found a way to check what the model had already decided before it finished “reasoning.” They published their research earlier this month. On straightforward questions, the model’s internal confidence locked onto the correct answer almost immediately. Then it kept generating reasoning tokens anyway!

When the researchers forced the model to stop reasoning once it had already made up its mind, token use dropped by up to 80%, while accuracy remained comparable.

Read the previous paragraph again. That’s five times cheaper, just telling the model not to overthink it.

Researchers have started calling this phenomenon “Reasoning Theatre”: the model performing deliberation that it has already completed. The term comes from multiple independent research teams arriving at the same conclusion: on many everyday tasks, chain-of-thought reasoning is decorative rather than functional.

The Spin Cycle

In one of my favourite books, Factfulness, Hans Rosling told a story about his grandmother seeing a washing machine for the first time. She pulled up a chair and watched the entire cycle, mesmerised. And fair enough: the machine was doing real work that had consumed hours of her life every week.

I think many of us are doing the same thing with AI reasoning. We watch the “thinking” animation pulse. We see the token counter climb. We wait. And we feel, instinctively, that something important is happening in there.

I call this “The Spin Cycle”: the time, money, and attention we spend watching AI perform reasoning that doesn’t change the answer. Rosling’s grandmother watched a machine that was genuinely working. We might be watching one that’s already finished.

The economics are not trivial. Reasoning tokens are billed as output (the most expensive token category), and a model can generate thousands of them behind the scenes before producing a few visible sentences. If 80% of those tokens on routine tasks are performative, as the Goodfire research suggests, then most of what you’re paying for on everyday queries is the spin cycle.

It’s as if we measured driving performance by fuel burned rather than distance covered.

So what?

Here’s the contrarian flip: every time you dismiss a smaller, cheaper, or locally-run model because it “can’t reason as well,” ask yourself what you’re actually comparing. If the big model’s reasoning is largely decorative on your task, then a smaller model that skips the theatre might deliver the same answer, just without the performance. The speed difference might even cancel out: a fast model doing unnecessary reasoning versus a slower model going straight to the answer.

What to do when you’re back at work. Pick three tasks you regularly run on a reasoning model. Run the same tasks on a smaller or non-reasoning model. Compare the outputs side by side. If you can’t tell the difference, and I suspect that for most routine tasks you won’t, you’ve found your spin cycle.

If you manage an AI budget, ask your team a harder question: what percentage of our queries actually need extended reasoning? If nobody knows, that’s the first thing to fix. You might be funding a very expensive washing machine show.

The Basu & Chakraborty paper, “When AI Shows Its Work, Is It Actually Working?” is available at arXiv:2603.22816. The Goodfire/Harvard paper, “Reasoning Theater,” is at arXiv:2603.05488.

Fascinating. It sounds a bit like a person with lots of experience (tacit knowledge) who arrives at the solution to a problem immediately but need to back this up by reasoning so that others can follow.

But even though the solution is already known, people who are more educated and thus should know better are hired by companies to find a more sophisticated solution which in turn doesn’t differ so much from what the experienced person offered.

AI seems to behave in a similar manner. Not surprising if we consider it’s been trained by the content humans created.